
The --n-workers setting shows odd behavior. #5

Open

Description

I'm running a train command locally.

python -m spacy ray train --n-workers 4 ...

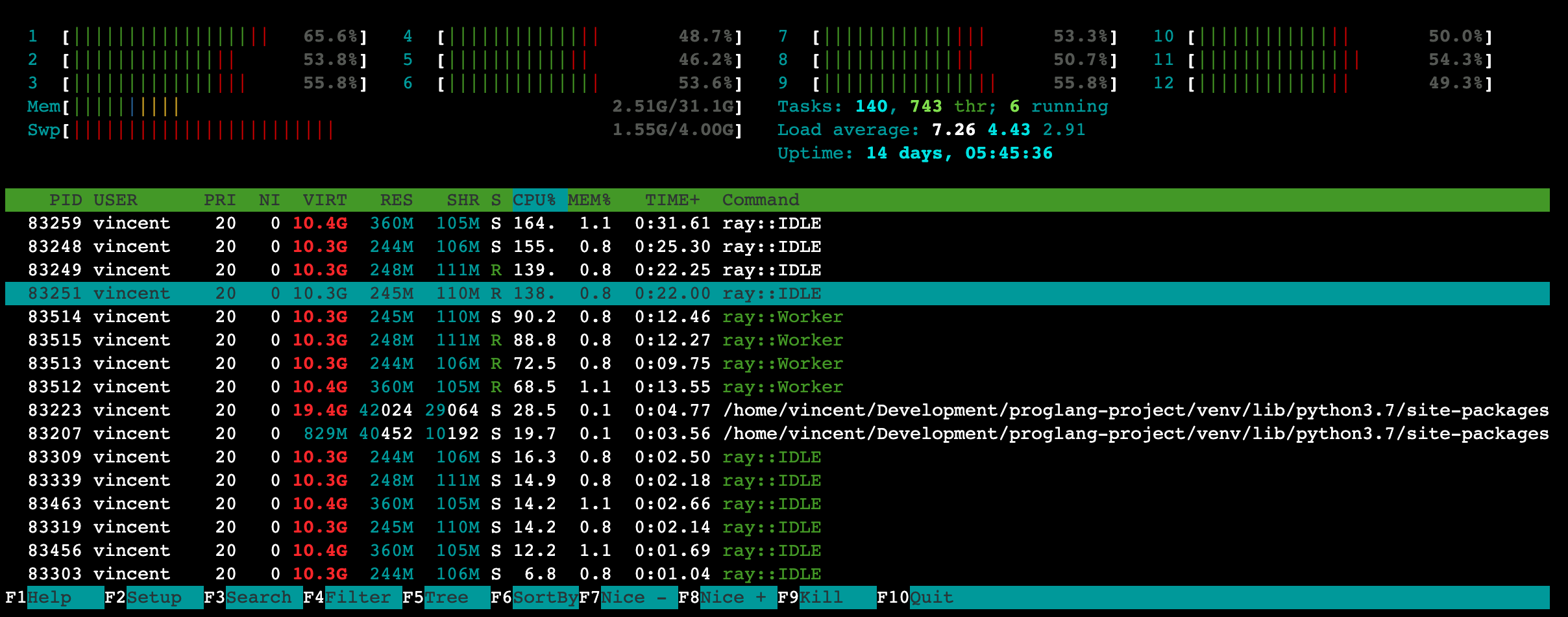

Here are the results from htop.

My machine isn't running anything else, but it looks like 12 threads are spinning up even though I asked for 4 workers. I'm also seeing a lot of ray::IDLE entries, so I'm wondering if something strange is happening. On top of that, the train logs show no learning taking place.
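To separate worker processes from the thread rows in htop (which, by default, lists userland threads as their own rows), one option is to count process titles that start with `ray::`. The following is only a minimal sketch: the helper names `ray_process_titles` and `current_command_lines` are made up for illustration, and the `ps -eo args=` call assumes a Linux procps-style `ps`.

```python
import subprocess
from collections import Counter


def ray_process_titles(command_lines):
    """Count process titles starting with 'ray::' (for example 'ray::IDLE')."""
    titles = Counter()
    for line in command_lines:
        parts = line.split()
        # Only the first token is the process title; skip empty lines.
        if parts and parts[0].startswith("ray::"):
            titles[parts[0]] += 1
    return titles


def current_command_lines():
    """Return one command line per running process.

    Assumption: a procps-style `ps` is available; `args=` drops the header row.
    """
    result = subprocess.run(
        ["ps", "-eo", "args="], capture_output=True, text=True, check=True
    )
    return result.stdout.splitlines()
```

Calling `ray_process_titles(current_command_lines())` while training is running gives a per-title count of processes, which can then be compared against the `--n-workers` value.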

    (venv) ➜ proglang-project git:(main) ✗ python -m spacy project run train

    =================================== train ===================================
    Running command: /home/vincent/Development/proglang-project/venv/bin/python -m spacy ray train --n-workers 4 configs/config.cfg --output training/ --paths.train corpus/stack-overflow-labels-train.spacy --paths.dev corpus/stack-overflow-labels-train.spacy
    ℹ Using CPU
    2021-03-02 22:07:33,929 INFO resource_spec.py:231 -- Starting Ray with 17.87 GiB memory available for workers and up to 8.94 GiB for objects. You can adjust these settings with ray.init(memory=<bytes>, object_store_memory=<bytes>).
    2021-03-02 22:07:34,299 INFO services.py:1193 -- View the Ray dashboard at localhost:8265
    (pid=83259) E    #       LOSS TOK2VEC  LOSS NER  ENTS_F  ENTS_P  ENTS_R  SCORE
    (pid=83259) ---  ------  ------------  --------  ------  ------  ------  ------
    (pid=83259)   0       0          0.00     44.50    0.00    0.00    0.00    0.00
    (pid=83259)   0     200          0.00   9754.83    0.00    0.00    0.00    0.00
    (pid=83259)   2     400          0.00  12038.00    0.00    0.00    0.00    0.00
    (pid=83259)   3     600          0.00  14772.00    0.00    0.00    0.00    0.00
    (pid=83259)   5     800          0.00  18170.17    0.00    0.00    0.00    0.00
    (pid=83259)   7    1000          0.00  22302.00    0.00    0.00    0.00    0.00
    (pid=83259)   9    1200          0.00  27441.83    0.00    0.00    0.00    0.00
    (pid=83259)  16    1600          0.00  40972.67    0.00    0.00    0.00    0.00
    (pid=83259)  21    1800          0.00  49929.84    0.00    0.00    0.00    0.00

Not much learning happening. Here's the same run but without the ray integration.

    =================================== train ===================================
    Running command: /home/vincent/Development/proglang-project/venv/bin/python -m spacy train configs/config.cfg --output training/ --paths.train corpus/stack-overflow-labels-train.spacy --paths.dev corpus/stack-overflow-labels-train.spacy
    ℹ Using CPU

    =========================== Initializing pipeline ===========================
    Set up nlp object from config
    Pipeline: ['tok2vec', 'ner']
    Created vocabulary
    Finished initializing nlp object
    Initialized pipeline components: ['tok2vec', 'ner']
    ✔ Initialized pipeline

    ============================= Training pipeline =============================
    ℹ Pipeline: ['tok2vec', 'ner']
    ℹ Initial learn rate: 0.0
    E    #       LOSS TOK2VEC  LOSS NER  ENTS_F  ENTS_P  ENTS_R  SCORE
    ---  ------  ------------  --------  ------  ------  ------  ------
      0       0          0.00     44.50    0.00    0.00    0.00    0.00
      0     200          9.67  15358.64    0.00    0.00    0.00    0.00
      2     400         45.78   3916.13   67.20   92.93   52.63    0.67
      3     600         70.91   1395.79   92.36   93.29   91.45    0.92
      5     800         93.66    831.71   93.79   94.11   93.47    0.94
      7    1000        108.12    759.16   95.15   95.38   94.91    0.95

Something feels strange about the training loop with ray, so I figured I'd report it.
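The difference between the two logs can also be checked mechanically. What follows is a rough sketch, not part of spaCy or spacy-ray: `parse_rows` and `looks_stuck` are hypothetical helpers, and the sample rows are copied from the two runs above. It treats a run as stuck when every row after step 0 has a tok2vec loss of 0.00 and an ENTS_F of 0.00.

```python
import re

# Optional "(pid=N)" prefix, then the eight numeric columns of the training table.
ROW = re.compile(
    r"^(?:\(pid=\d+\)\s+)?"
    r"(\d+)\s+(\d+)\s+([\d.]+)\s+([\d.]+)\s+([\d.]+)\s+([\d.]+)\s+([\d.]+)\s+([\d.]+)$"
)
COLUMNS = ("epoch", "step", "loss_tok2vec", "loss_ner",
           "ents_f", "ents_p", "ents_r", "score")


def parse_rows(log_text):
    """Extract the numeric table rows from a training log; other lines are skipped."""
    rows = []
    for line in log_text.splitlines():
        match = ROW.match(line.strip())
        if match:
            rows.append(dict(zip(COLUMNS, (float(v) for v in match.groups()))))
    return rows


def looks_stuck(rows):
    """True when no row after step 0 has a non-zero tok2vec loss or F-score."""
    later = [r for r in rows if r["step"] > 0]
    return bool(later) and all(
        r["loss_tok2vec"] == 0.0 and r["ents_f"] == 0.0 for r in later
    )


# Rows copied from the two runs in this report.
RAY_LOG = """
(pid=83259) 0 0 0.00 44.50 0.00 0.00 0.00 0.00
(pid=83259) 0 200 0.00 9754.83 0.00 0.00 0.00 0.00
(pid=83259) 2 400 0.00 12038.00 0.00 0.00 0.00 0.00
"""
PLAIN_LOG = """
0 0 0.00 44.50 0.00 0.00 0.00 0.00
0 200 9.67 15358.64 0.00 0.00 0.00 0.00
2 400 45.78 3916.13 67.20 92.93 52.63 0.67
"""

ray_stuck = looks_stuck(parse_rows(RAY_LOG))      # the ray run
plain_stuck = looks_stuck(parse_rows(PLAIN_LOG))  # the run without ray
```

With the rows above, `ray_stuck` is `True` and `plain_stuck` is `False`, which matches what the tables show by eye: the ray run never reports a tok2vec loss or a non-zero score, while the plain run does from step 200 onward.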

Here's my spaCy info.

    ============================== Info about spaCy ==============================

    spaCy version    3.0.3
    Location         /home/vincent/Development/proglang-project/venv/lib/python3.7/site-packages/spacy
    Platform         Linux-5.8.0-7642-generic-x86_64-with-Pop-20.10-groovy
    Python version   3.7.9
    Pipelines
